Automatic speech recognition (ASR) is the task of having computers convert human speech into the corresponding text. ASR use cases include dictation systems, call routing, and virtual assistants such as Siri and Alexa. Researchers from the Institute of Smart Systems and Artificial Intelligence (ISSAI) at Nazarbayev University (NU) have previously developed advanced ASR systems for the Kazakh language. Now, they have extended their work to a multilingual ASR model that can recognize ten Turkic languages: Azerbaijani, Bashkir, Chuvash, Kazakh, Kyrgyz, Sakha, Tatar, Turkish, Uyghur, and Uzbek. The model identifies which of the ten Turkic languages an utterance was spoken in and displays the text of the utterance on the screen. The model can also recognize English and Russian speech.

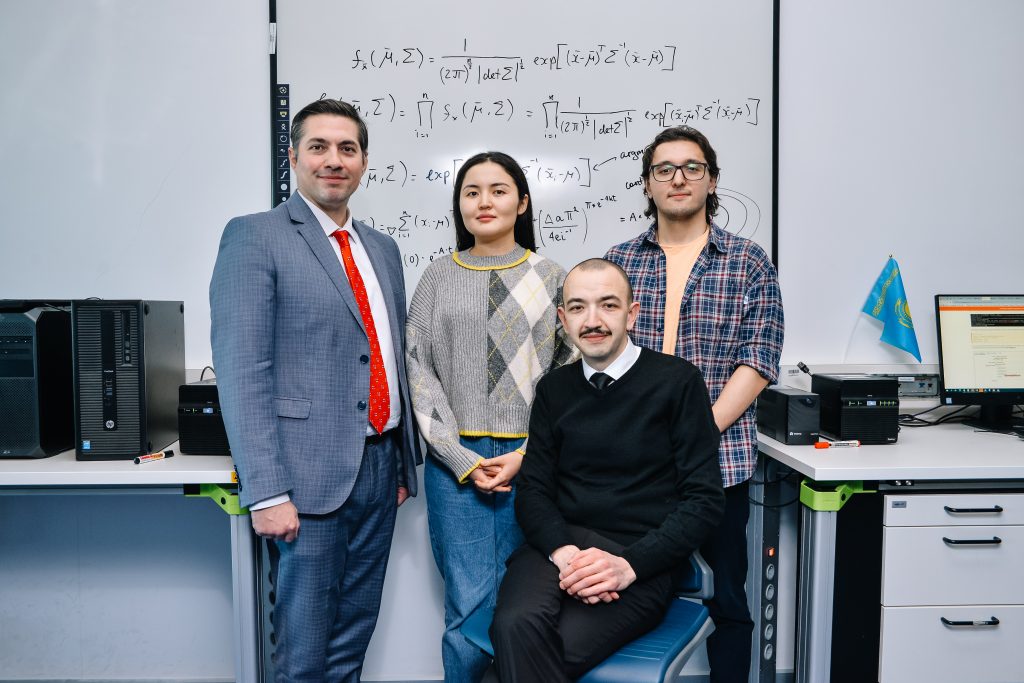

“Generally, the development of ASR models is directed toward languages that are well represented on the Internet and for which large amounts of speech data are publicly available, for example, English, Mandarin, or Japanese. Our aim was to develop an ASR model for Turkic languages for most of which very few such data exist,” says ISSAI data scientist Saida Mussakhojayeva. “By utilizing the common features of Turkic languages in terms of lexis, phonology, and morphology, we sought to develop a robust joint model in which the ten Turkic languages in our study would reciprocally benefit from each other.”

The performance of ASR models is normally evaluated in terms of the percentage of characters and words that are incorrectly predicted in the text of a spoken utterance. Thus, the closer this percentage is to zero, the more accurate the ASR model is and the fewer errors there are in the predicted text. For example, when recognizing a Chuvash utterance, the text predicted by ISSAI’s multilingual ASR model contains errors in 4.9% of characters and 17.2% of words. “One can say that these results are still far from perfect, which is admittedly true. However, one should also keep in mind that monolingual models built for a single Turkic language often give results that are considerably worse than what our model can achieve,” says Kaisar Dauletbek, a fourth-year NU student and an ISSAI research assistant. “Our model takes advantage of the relatedness and remarkable similarity of the Turkic languages and produces results that would not have been possible had we attempted to solve the task by using the few resources available for some of the languages and creating monolingual models alone. For example, for Bashkir, Kazakh, Tatar, Turkish, Uyghur, and Uzbek, the percentage of errors in characters made by our model is below 5%.”
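To make these percentages concrete, here is a minimal sketch of how character and word error rates are conventionally computed: the edit distance (substitutions, insertions, and deletions) between the reference transcript and the predicted text, divided by the length of the reference. The article does not say which scoring tool ISSAI used, so the function names and the English example sentence below are illustrative assumptions, not the researchers' evaluation code.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences.

    Counts the minimum number of substitutions, insertions, and
    deletions needed to turn `ref` into `hyp`.
    """
    prev = list(range(len(hyp) + 1))  # distances from an empty reference prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]  # turning i reference units into an empty hypothesis
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]


def error_rate(ref_units, hyp_units):
    """Error rate in percent, relative to the reference length."""
    if not ref_units:
        raise ValueError("reference must not be empty")
    return 100.0 * edit_distance(ref_units, hyp_units) / len(ref_units)


def wer(reference, hypothesis):
    """Word error rate: units are whitespace-separated words."""
    return error_rate(reference.split(), hypothesis.split())


def cer(reference, hypothesis):
    """Character error rate: units are single characters, spaces included."""
    return error_rate(list(reference), list(hypothesis))


if __name__ == "__main__":
    # Hypothetical transcript pair, used only to illustrate the calculation.
    reference = "the cat sat on the mat"
    hypothesis = "the cat sit on mat"
    print(f"WER: {wer(reference, hypothesis):.1f}%")
    print(f"CER: {cer(reference, hypothesis):.1f}%")
```

Because one wrong character makes the whole word wrong, the word-level figure is usually higher than the character-level one, which matches the gap between the Chuvash figures quoted above.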

It is also noteworthy that the multilingual ASR model developed by ISSAI researchers is the first joint model able to recognize Turkic languages and can be freely tested on ISSAI’s website. In addition, all the models developed, as well as the datasets and code used in the research project, are publicly available for download on the same website. “One of the fundamental ethical principles that ISSAI has always adhered to is the principle of transparency,” says Rustem Yeshpanov, ISSAI technical writer. “Simply put, every research activity and project that the Institute undertakes is open to public scrutiny through the publication of the protocols and the results obtained. We deem it imperative that we disclose the design, methods, findings, limitations, and risks of any study carried out within the walls of ISSAI and thus make all the resources used in the development of the multilingual ASR model accessible to anyone ranging from speech processing enthusiasts to local developers and entrepreneurs to international businesses.”

ISSAI’s interest in developing a multilingual ASR model for Turkic languages is not accidental. ISSAI has already achieved well-deserved success in creating the first open-source Kazakh speech corpora (KSC and KSC2), large-scale open-source Kazakh text-to-speech corpora (KazakhTTS and KazakhTTS2), and the largest publicly available Kazakh named entity recognition dataset (KazNERD). “Since its foundation in 2019, the Institute has put constant and considerable effort into promoting the Kazakh language in the digital world,” says Prof. Huseyin Atakan Varol, ISSAI Founding Director. “However, the Institute’s interest in language and speech technologies is not limited to Kazakh, but also extends to other Turkic languages. In this way, our Institute will emerge as one of the scientific centers for artificial intelligence and data science in the Turkic world and Eurasia.” Prof. Varol continues, “We believe that the most important outcome of these projects is the training of highly qualified technical experts who will not only drive the technological development of Kazakhstan, but also willingly share and apply their professional knowledge and know-how to contribute to the advancement of technologies in other countries, thus creating a better world for future generations.”