All applicants must carefully review the description of each track and determine which two tracks they wish to select.

In this research direction, participants can follow two sub-tracks:

1) DESIGN sub-track

In the design sub-track, participants will work with “Aibike”, a photorealistic avatar system deployed on the Unreal Engine platform. They will design custom clothing for Aibike in Blender, create avatar animations using Cascadeur and the OptiTrack motion capture system, design realistic lighting for the virtual classroom, and implement camera control in the Unreal Engine scene.

2) DEPLOYMENT sub-track

In the deployment sub-track, participants will build a modern, customized conversational avatar using the Avaturn platform (or an up-to-date alternative) and integrate it into a full speech-to-speech pipeline. The work will cover two deployment scenarios: cloud-based (fast prototyping with external APIs) and local/offline (running the full stack on our infrastructure).

Avatar creation with Avaturn: Participants will create and customize a lightweight avatar (appearance, outfit variations if needed, export formats, and basic rigging with an animation-ready setup) using current avatar creation ecosystems and pipelines suitable for real-time applications.

Cloud and local deployment: Participants will implement cloud-based and local deployments of the avatar system, which consists of an automatic speech recognition (ASR) → large language model (LLM) → text-to-speech (TTS) pipeline, a real-time avatar interaction loop, and lip-sync.

Personal RAG for specialization: Each participant will create a personalized retrieval-augmented generation (RAG) layer using their own data (documents, notes, domain materials). With that knowledge base, the avatar will answer more accurately and become specialized in a chosen topic (e.g., a personal assistant, domain tutor, or project consultant). This includes data preparation, indexing, retrieval configuration, and evaluation of grounded responses.
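The personal RAG layer described above comes down to three steps: splitting a participant's documents into chunks, indexing them, and retrieving the most relevant chunks to ground the LLM's answer. The sketch below illustrates these steps with a plain TF-IDF index and cosine similarity from the Python standard library. This is an assumption made to keep the example self-contained; a real deployment would more likely use an embedding model and a vector database. All names (`chunk`, `TfidfIndex`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercased word tokens; an embedding model would replace this in practice.
    return re.findall(r"\w+", text.lower())


def chunk(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    # Data preparation: split a document into overlapping windows of `size` words.
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


class TfidfIndex:
    """Hypothetical minimal retriever: TF-IDF weighted term vectors + cosine similarity."""

    def __init__(self, chunks: list[str]):
        self.chunks = chunks
        tfs = [Counter(tokenize(c)) for c in chunks]
        n = len(chunks)
        df = Counter(term for tf in tfs for term in tf)  # number of chunks containing each term
        # Smoothed IDF: rarer terms get larger weights, common ones stay above zero.
        self.idf = {t: math.log((1 + n) / (1 + d)) + 1.0 for t, d in df.items()}
        self.vecs = [self._weight(tf) for tf in tfs]

    def _weight(self, tf: Counter) -> dict[str, float]:
        # Terms never seen during indexing get weight 0 and cannot match anything.
        return {t: c * self.idf.get(t, 0.0) for t, c in tf.items()}

    @staticmethod
    def _cosine(a: dict[str, float], b: dict[str, float]) -> float:
        dot = sum(w * b.get(t, 0.0) for t, w in a.items())
        na = math.sqrt(sum(w * w for w in a.values()))
        nb = math.sqrt(sum(w * w for w in b.values()))
        return dot / (na * nb) if na and nb else 0.0

    def retrieve(self, query: str, k: int = 3) -> list[str]:
        # Retrieval configuration: k controls how many chunks are passed to the LLM.
        q = self._weight(Counter(tokenize(query)))
        ranked = sorted(range(len(self.chunks)),
                        key=lambda i: self._cosine(q, self.vecs[i]), reverse=True)
        return [self.chunks[i] for i in ranked[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    # Grounding: instruct the LLM to answer only from the retrieved chunks.
    bullets = "\n".join(f"- {c}" for c in context)
    return f"Answer using only the context below.\nContext:\n{bullets}\n\nQuestion: {question}"
```

In use, `TfidfIndex([c for doc in notes for c in chunk(doc)]).retrieve(question)` would return the chunks to pass to `build_prompt`, and the resulting prompt would go to the LLM stage of the ASR → LLM → TTS pipeline. Evaluating grounded responses can then start by checking whether each answer is supported by the chunks that were retrieved for it.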

By the end, participants will have a working conversational avatar in two modes (cloud and local), with speech interaction, lip-sync, and personalized RAG, plus a clear understanding of deployment trade-offs (latency, cost, quality, and robustness).

Mentors: Zhanat Makhataeva, Galammadin Askar, Auyeskhan Alibekov

This Track includes 2 projects; you can choose one of them after the Program starts.

Project 1: Analyzing Agentic Performance in Dynamic Sports Environments

Description: This project focuses on the implementation and testing of AI agents within real-time sports environments. Rather than focusing solely on forecasting, the research investigates how agents interact with live game dynamics—including player movement, tactical shifts, and situational changes. Participants will evaluate the ability of both open-source and proprietary LLMs to process constant streams of data and maintain a coherent “state” of the environment. The study aims to measure the latency and accuracy of agent decisions when faced with the fast-paced, non-linear nature of a competitive match, determining how effectively current models can assist in real-time technical analysis.

Project 2: Comparative Analysis of LLM Capabilities in Research Operations

Description: This project explores the practical performance of LLMs when performing standard research operations. The goal is to conduct a direct comparison between various models to see how they handle the everyday tasks required in a research setting, such as organizing information, identifying specific patterns in data, and reviewing technical documentation. The research focuses on the consistency and logic of the models. Students will test how agents from different providers (open and closed) perform when tasked with evaluating technical content or navigating complex instructions. By documenting where these models succeed or fail in these operational tasks, the project provides a local baseline for which LLMs are most reliable for assisting in structured research workflows.

Mentor: Akylbek Maxutov

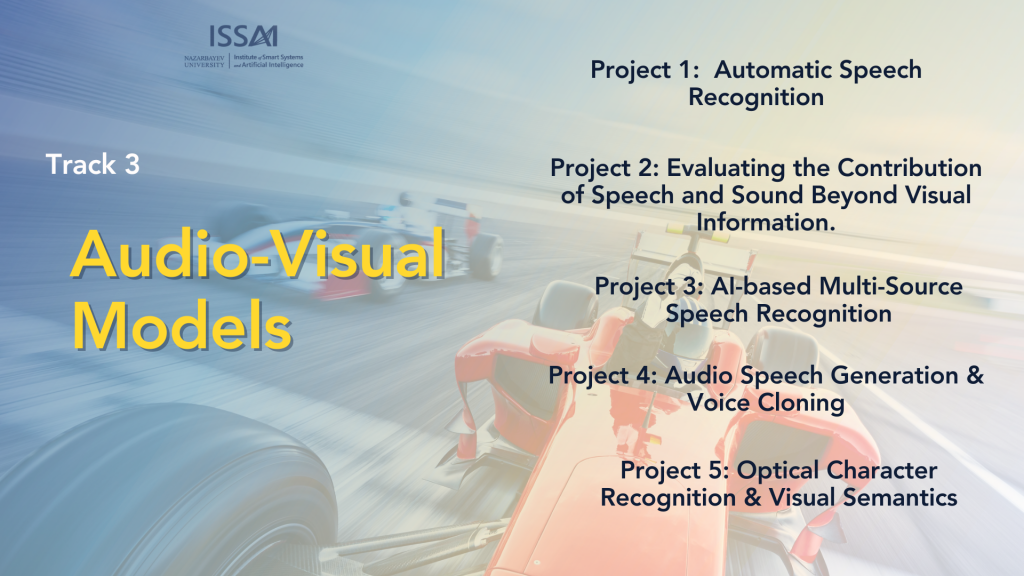

Audio-Visual Models (This Track includes 5 projects; you can choose one of them after the Program starts)

Project 1: Automatic Speech Recognition (ASR)

This project gives students a hands-on introduction to building modern automatic speech recognition (ASR) systems—from collecting and preparing speech data, to training an ASR model, to assembling a complete end-to-end pipeline. Students will experiment with model behavior and customize it for specific use cases, learning the practical trade-offs that matter in real deployments.

Project 2: Evaluating the Contribution of Speech and Sound Beyond Visual Information

Human understanding of video content relies on both what is seen and what is heard. In real-world scenarios (lectures, tutorials, conversations, and instructional videos), speech and audio often carry essential semantic information that is not explicitly visible in the video frames. However, many existing video understanding systems rely primarily on visual information, treating audio as auxiliary or ignoring it entirely. In this project, students will study and develop multimodal machine learning models that jointly process audio and video to better understand spoken and visual content in videos. They will analyze model behavior, identify cases where audio information improves performance, and examine failure modes of unimodal systems.

Project 3: AI-based Multi-Source Speech Recognition

People use speech all the time, but it’s still not that easy for computers to handle it reliably. The same sentence can sound very different depending on who is speaking, where they are, and how good the recording is, so automatic speech systems don’t always work consistently.

In this SRP project, we want to build a general AI pipeline that takes spoken input and turns it into a clear, useful result. We will also look at whether bringing in additional sources of information can make speech recognition stronger, especially when the audio alone is messy. The main idea is to see what extra signals actually help, how to combine them in a practical way, and what we gain or lose as the system becomes more complex.

Project 4: Audio Speech Generation & Voice Cloning

This project examines the practical performance of modern Text-to-Speech (TTS) and voice cloning models on real-world speech generation tasks for the Kazakh language. The goal is to systematically compare multiple TTS systems, both open-source and proprietary, to evaluate how effectively they convert textual input into natural, intelligible, and expressive speech. The research focuses on speech quality, prosody, speaker similarity, multilingual robustness, and consistency across different languages and accents. By benchmarking model outputs under controlled prompts and documenting strengths and failure cases, the project establishes a practical baseline for identifying which TTS and voice cloning models are most reliable for multilingual content generation, accessibility tools, and conversational AI systems.

Project 5: Optical Character Recognition & Visual Semantics

This project examines the practical performance of modern Optical Character Recognition (OCR) and visual semantic understanding models when applied to real-world document and image analysis tasks. The goal is to compare a range of OCR and vision–language models to evaluate how accurately they extract text and interpret visual structure from complex visual inputs such as scanned documents, forms, diagrams, tables, and natural scene images. The research focuses on text recognition accuracy, layout understanding, semantic consistency, and robustness to noise, varied fonts, and low-quality imagery. Students will test how different models handle tasks such as document parsing, key–value extraction, visual question answering, and semantic alignment between text and visual elements. By analyzing success and failure cases across diverse datasets, the project provides a baseline for assessing which OCR and visual semantic models are most effective for document intelligence, automated data extraction, and multimodal reasoning workflows.
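Text recognition accuracy in OCR benchmarks is commonly reported as character error rate (CER): the edit distance between the model output and the reference transcription, divided by the reference length. Applied to word lists instead of characters, the same distance gives word error rate (WER), the usual metric for speech recognition. A minimal sketch in Python, assuming plain Unicode strings with no normalization (case folding, whitespace cleanup) applied beforehand; the function names are illustrative.

```python
from typing import Sequence


def levenshtein(ref: Sequence, hyp: Sequence) -> int:
    # Minimum number of substitutions, insertions, and deletions (each cost 1)
    # turning `ref` into `hyp`, computed with two rolling DP rows.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,             # deletion of r
                         cur[j - 1] + 1,          # insertion of h
                         prev[j - 1] + (r != h))  # substitution, free if items match
        prev = cur
    return prev[-1]


def cer(reference: str, hypothesis: str) -> float:
    # Character error rate; can exceed 1.0 if the output has many insertions.
    if not reference:
        raise ValueError("reference transcription must be non-empty")
    return levenshtein(reference, hypothesis) / len(reference)


def wer(reference: str, hypothesis: str) -> float:
    # Same edit distance over whitespace-separated words instead of characters.
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference transcription must contain at least one word")
    return levenshtein(ref_words, hypothesis.split()) / len(ref_words)
```

For example, an OCR output that reads the Kazakh letters "Қ" and "қ" as Cyrillic "К" and "к" in "Қазақстан" makes two substitutions over nine characters, so `cer("Қазақстан", "Казакстан")` is 2/9 ≈ 0.22.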

Mentors: Rakhat Meiramov, Tomiris Rakhimzhanova, Adema Sharipova, Anuar Aryngazin, Mamyrbek Parakhat

Image/Video Generation: Applications of Advanced Generative AI: Cultural Image Editing and Interactive Avatars

This research mentorship program introduces students to modern Generative AI, covering text-to-image generation, image editing, and video animation. The program starts with hands-on training in deep learning and diffusion models, focusing on how to generate and fine-tune high-quality images. After this foundation, students, as a group, will choose one of two specialization tracks based on their interests.

Option A: 2D AI Avatar Pipeline, where students build a system that generates character portraits and animates them using audio-driven lip-syncing. This track explores both high-quality cinematic results and real-time interactive avatars.

Option B: Cultural Image Refinement, where students adapt image models to reflect Kazakh cultural features in image-to-image editing tasks through data collection, fine-tuning, and benchmarking.

Students will work with tools such as diffusion models, LoRA, Wav2Lip, and remote GPU servers. By the end of the program, participants will deliver either a benchmarked cultural image model or a working interactive avatar prototype.

Mentor: Nartay Aikyn

Mobile Apps

Phase 1 (Weeks 1–2): Foundations of Mobile Development

The first phase focuses on building a strong conceptual understanding of how mobile development works. Students will study the differences between native and cross-platform development, how mobile operating systems such as iOS and Android manage applications, and the constraints imposed by mobile hardware, including memory, performance, and battery usage. While React Native will be the main framework discussed in depth, this phase also introduces native development concepts and device-level APIs to help students understand what happens beneath abstraction layers and why certain architectural decisions are necessary.

Phase 2 (Weeks 3–4): Practical Mobile Engineering

In the second phase, students transition from theory to hands-on development by building a simple mobile application, such as a lightweight game or interactive system, without relying on a game engine. The emphasis is on application structure, state management, rendering, user interaction, and debugging. Through this process, students gain a practical understanding of how mobile applications behave in real environments and how performance and design decisions directly affect user experience.

Phase 3 (Weeks 5–6): AI Integration and Comparative Evaluation

The final phase introduces artificial intelligence into the mobile application developed earlier. Students will integrate AI functionality using both on-device inference and server-based inference, allowing them to observe and compare different deployment strategies. This phase emphasizes evaluating latency, responsiveness, resource consumption, and development complexity, encouraging students to analyze the strengths and limitations of each approach. The focus is on understanding real-world trade-offs when deploying AI on mobile platforms rather than optimizing model accuracy alone.
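Comparing on-device and server-based inference starts with measuring latency the same way for both paths. The sketch below times any inference callable over the same inputs and reports median, 95th-percentile, and worst-case latency, which describes responsiveness better than a single average. It is written in Python for brevity; a React Native app would need equivalent timing around its own calls. `on_device_infer` and `server_infer` are hypothetical stand-ins that only sleep, to be replaced by a real local model call and a real network request.

```python
import math
import statistics
import time
from typing import Callable


def measure_latency(infer: Callable[[str], object], inputs: list[str],
                    warmup: int = 2) -> dict[str, float]:
    """Time `infer` on each input and summarize latency in milliseconds."""
    if not inputs:
        raise ValueError("inputs must be non-empty")
    # Warm-up calls absorb one-time costs (model loading, caches, connection setup).
    for x in inputs[:warmup]:
        infer(x)
    samples_ms = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    samples_ms.sort()
    # Nearest-rank 95th percentile.
    p95 = samples_ms[max(0, math.ceil(0.95 * len(samples_ms)) - 1)]
    return {"median_ms": statistics.median(samples_ms), "p95_ms": p95, "max_ms": samples_ms[-1]}


def on_device_infer(prompt: str) -> str:
    time.sleep(0.005)  # hypothetical: small local model, no network round trip
    return prompt.upper()


def server_infer(prompt: str) -> str:
    time.sleep(0.02)  # hypothetical: larger remote model plus network latency
    return prompt.upper()


if __name__ == "__main__":
    prompts = [f"request {i}" for i in range(20)]
    for name, fn in [("on-device", on_device_infer), ("server", server_infer)]:
        stats = measure_latency(fn, prompts)
        print(name, {k: round(v, 1) for k, v in stats.items()})
```

Reporting the tail (p95, max) alongside the median matters because a path that looks fast on average can still feel unresponsive if occasional slow calls, such as network stalls, are frequent enough to notice.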

Mentors: Akjan Yerkin, Nail Kamilov

Programming/SWE:

Week 1: Master Python by solving 20+ Easy, 15+ Medium, and 5+ Hard LeetCode tasks while completing a basic Linux (Bash) course and learning Git flow and Docker fundamentals.

Week 2: Build and deploy a responsive landing page to understand the basics of web structure, styling, and user interface design.

Week 3: Connect to a remote server, configure development environments, and build a functional backend using FastAPI or Django with integrated database management.

Week 4: Set up CI/CD pipelines with GitHub Actions runners, dive into RAG (Retrieval-Augmented Generation) architecture, and explore vector databases and model API integrations.

Weeks 5–6: Integrate with internal APIs (Mangisoz, Oylan, Tilsynk, Beynele) to develop and present a final project, such as an automated book parser and AI-driven rewriter.

Mentors: Nail Kamilov, Sanzhar Sapar, Galammadin Askar

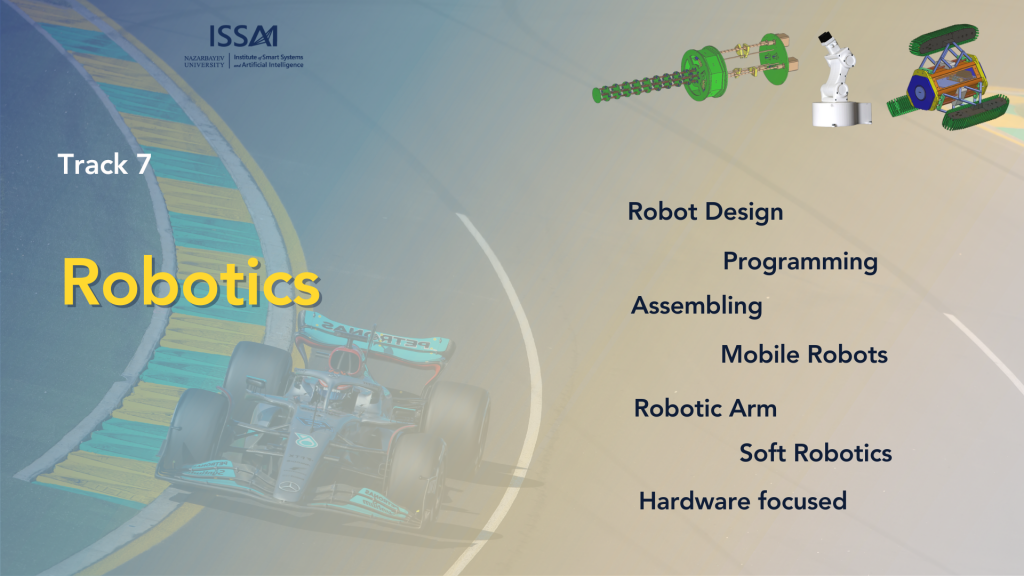

Project 1: Join a hands-on summer research program where you’ll learn how real robots are created, from the first sketch to a working machine. Students will work in small teams and build a complete robotic system while exploring how engineering and coding come together in modern robotics.

Robot Design

You’ll design a robot to solve a specific challenge (e.g., line following, object pickup, navigation). Learn the basics of mechanical design, choosing materials, creating simple CAD models, and planning sensors and actuators for your robot’s purpose.

Programming

Bring your robot to life through code. You’ll program microcontrollers or single-board computers to control motors, read sensors, and make decisions. Topics include motion control, sensor calibration, debugging, and writing clean, reliable code for real-world behavior.
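Sensor calibration often means mapping raw readings onto a meaningful scale using known reference points. A minimal two-point linear calibration sketch in Python, assuming a sensor whose response is roughly linear between the two references (for example, a reflectance sensor read over a white surface and over a black line). The raw values below are hypothetical, not taken from any specific kit.

```python
from typing import Callable


def make_calibration(raw_low: float, raw_high: float,
                     value_low: float = 0.0, value_high: float = 1.0,
                     clamp: bool = True) -> Callable[[float], float]:
    """Return a function mapping raw readings linearly onto [value_low, value_high].

    raw_low/raw_high are readings taken at two known reference conditions.
    """
    if raw_high == raw_low:
        raise ValueError("reference readings must differ")

    def calibrate(raw: float) -> float:
        value = value_low + (raw - raw_low) * (value_high - value_low) / (raw_high - raw_low)
        if clamp:
            # Keep outputs inside the calibrated range despite sensor noise.
            lo, hi = min(value_low, value_high), max(value_low, value_high)
            value = max(lo, min(hi, value))
        return value

    return calibrate


# Hypothetical readings: 100 over a white surface, 900 over a black line.
reflectance = make_calibration(raw_low=100, raw_high=900)
```

With these references, `reflectance(500)` returns 0.5, and readings slightly outside the calibrated range are clamped to 0.0 or 1.0. Recalibrating is then just a matter of taking two new reference readings under the actual lighting and surface conditions.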

Assembling

Build and test your robot step-by-step. Students will assemble mechanical parts, wire electronics safely, integrate sensors, and troubleshoot issues like loose connections or unstable motion. By the end, your team will demonstrate a working robot and present what you learned like a real research group.

Mobile Robots

Explore how robots move and navigate in the real world. Students will design and build a wheeled robot, integrate sensors (e.g., distance, line, IMU), and program behaviors such as obstacle avoidance, line following, and basic mapping. You’ll learn core concepts like locomotion, motor control, feedback, and reliable autonomous navigation through testing and iteration.
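The feedback idea behind line following can be illustrated with the simplest closed-loop controller, proportional (P) control: the motor commands change in proportion to how far the robot has drifted from the line. A minimal Python sketch, assuming a hypothetical differential-drive robot with two reflectance sensors whose readings are already normalized to [0, 1] (higher means more of the line under that sensor) and motor commands in [0, 1]. The gain and base speed are illustrative and would be tuned on the real robot.

```python
def clamp(x: float, lo: float = 0.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


def line_follow_step(left: float, right: float,
                     base_speed: float = 0.5, kp: float = 0.8) -> tuple[float, float]:
    """One proportional-control step; returns (left_motor, right_motor) commands."""
    # Error > 0: the line sits more under the left sensor, so the robot should turn left.
    error = left - right
    correction = kp * error
    # Slowing the left wheel and speeding up the right wheel turns the robot left.
    return clamp(base_speed - correction), clamp(base_speed + correction)
```

With the line centered, `line_follow_step(0.5, 0.5)` keeps both motors at 0.5; with the line fully under the left sensor, `line_follow_step(1.0, 0.0)` returns `(0.0, 1.0)`, a sharp left turn. Adding integral and derivative terms (PID) is the usual next step when a P controller oscillates or leaves a steady offset.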

Robotic Arm

Learn how robotic manipulators pick, place, and interact with objects. Students will assemble a multi-joint robotic arm, program precise movements, and control a gripper for simple tasks like sorting or stacking. Topics include kinematics basics, calibration, motion planning, and safety, plus plenty of hands-on practice tuning accuracy and repeatability.

Soft Robotics

Discover robots made from flexible materials inspired by nature. Students will design and fabricate soft actuators (e.g., pneumatic or tendon-driven), build simple molds, and test how shape and material affect motion. You’ll explore gentle gripping, compliant movement, and how soft robots can handle delicate objects—then showcase a working soft mechanism and explain the engineering behind it.

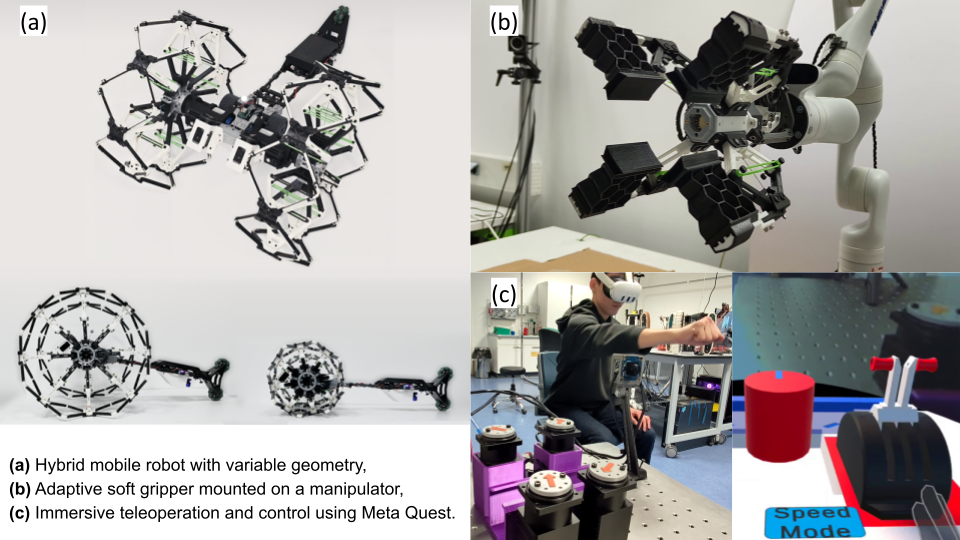

Project 2: Robotics & Intelligent Systems (Hardware-Focused Track):

In the Robotics track of the SRP program, students will engage in hands-on, hardware-centered projects involving the design, construction, and control of real robotic systems. The work will focus on basic to mid-level robotics concepts, making it suitable for students who are new to hardware as well as those with some prior experience. Interns will build adaptive grippers, hybrid mobile robots with variable configurations, and drone platforms. Based on individual interests, participants will work on mechanical assembly, electronics integration, sensing, and real-time control. The projects also include teleoperation of mobile robots using Meta Quest for immersive human–robot interaction, emphasizing practical learning with real robots.

Mentors: Azamat Yeshmukhametov, Gourav Moger

Core idea: Reproduce the Genie/GameNGen approach on a simple 2D Kazakh-themed game by training a diffusion model that predicts the next frame given an action input.
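The core idea above can be stated as a conditional denoising objective. The formulation below is the standard DDPM-style noise-prediction loss written for next-frame prediction; it is an illustrative assumption, not a specification of the project, and GameNGen's own training details (noise parameterization, context length, handling of past frames) may differ. Here $o_n$ is the game frame at step $n$, $a_n$ the action taken at that step, $k$ the number of past frames and actions used as context, $\bar{\alpha}_t$ the cumulative noise schedule at diffusion step $t$, and $\epsilon_\theta$ the denoising network.

```latex
x_t = \sqrt{\bar{\alpha}_t}\, o_{n+1} + \sqrt{1 - \bar{\alpha}_t}\, \epsilon,
\qquad \epsilon \sim \mathcal{N}(0, I)

\mathcal{L}(\theta) = \mathbb{E}_{n,\, t,\, \epsilon}
\Big[ \big\| \epsilon - \epsilon_\theta\big(x_t,\ t,\ o_{n-k+1:n},\ a_{n-k+1:n}\big) \big\|_2^2 \Big]
```

At play time, the model starts from noise, denoises conditioned on the most recent $k$ frames and actions to produce $o_{n+1}$, and appends that frame to its context, so the game runs autoregressively one frame at a time.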

Why feasible for SRP:

Mentor: Vladimir Albrekht