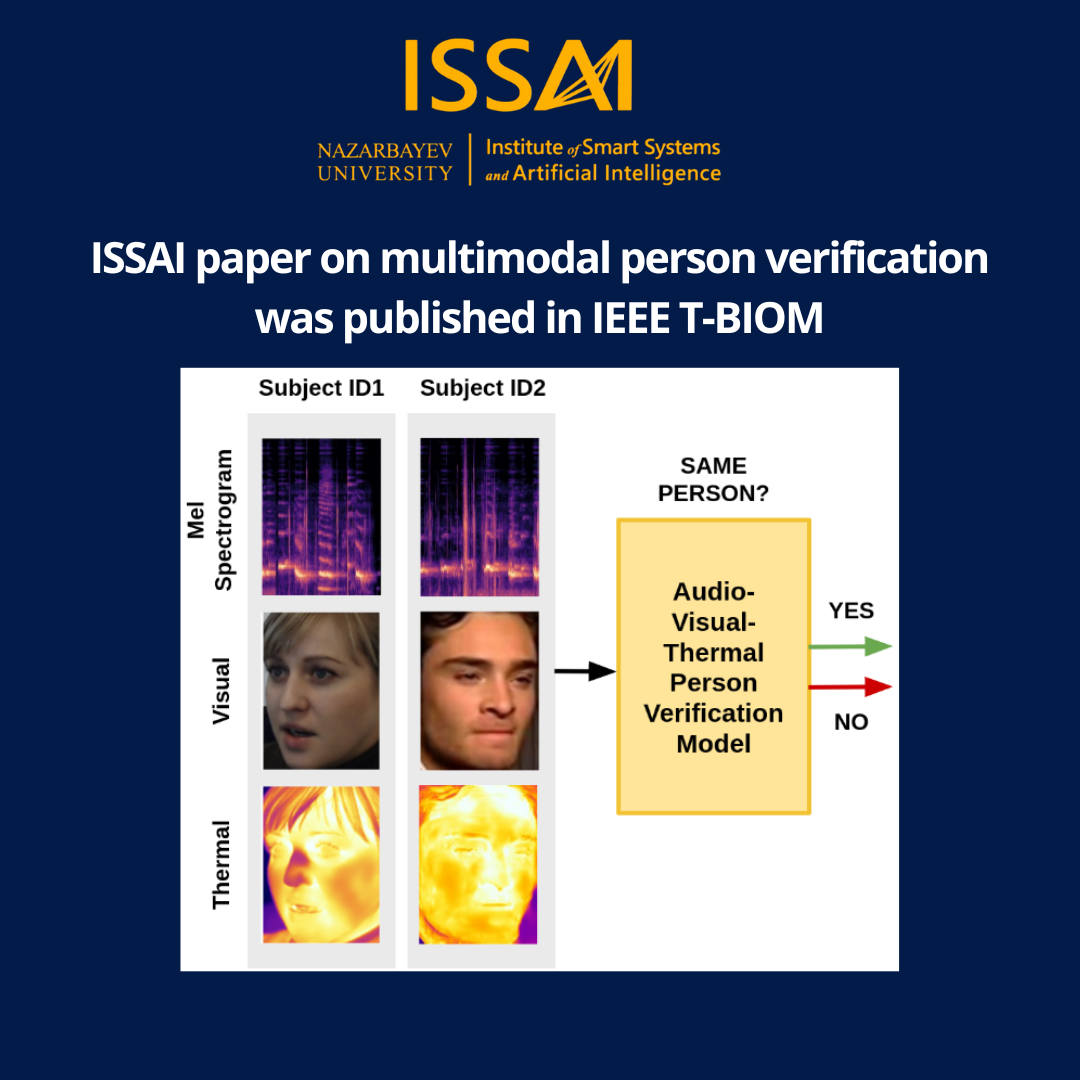

ISSAI researchers published a groundbreaking paper in the prestigious journal, IEEE Transactions on Biometrics, Behavior, and Identity Science. The paper, written by ISSAI data scientist Madina Abdrakhmanova, researcher Timur Unaspekov, and founding director Atakan Varol, explores how the fusion of audio, visual, and thermal modalities can be used to improve the performance of person verification systems. Specifically, researchers used domain transfer methods to augment their training data, merging real audio-visual data from the SpeakingFaces dataset with synthetic thermal data from the VoxCeleb dataset.

The team found that by using a score fusion of unimodal audio, unimodal visual, bimodal, and trimodal systems trained on the combined data, they achieved the best results on both the SpeakingFaces and VoxCeleb datasets. This approach also showed substantial resilience under challenging conditions, including low illumination and high noise levels.

The publication is expected to encourage further exploration into the use of synthetic data produced by generative methods to enhance the performance of deep learning models. As a commitment to fostering ongoing research in the field of multimodal person verification, the ISSAI researchers have made their code, pretrained models, and preprocessed dataset freely accessible in their GitHub repository.